Producer threads contend with each other and with the consumer thread for access to the list. You can eliminate the contention between producers by giving each producer its own queue (std::list + std::mutex or a spinlock). Each producer posts items into its own queue. When the consumer is ready, it locks those producer queues one by one and splices the elements into its own queue. If ordering is required, each element should carry a timestamp so that the consumer can sort all elements in its consolidated queue by time.

To post an item, create a temporary std::list of one element, then lock the mutex, splice that element into the queue, and unlock the mutex. This keeps your critical sections very short, because all you do while the mutex is locked is std::list splicing, which is just a few pointer modifications.

Alternatively, just use the concurrent_bounded_queue class from Intel® Threading Building Blocks.

lock-queue.js is a simple locking mechanism to serialize (queue) access to a resource. A Locker is a queue in which each item can require either an exclusive or a non-exclusive lock on some resource. Items are provided as promise-returning functions.
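The per-producer queue idea can be sketched roughly as follows. This is a minimal illustration, not a reference implementation: `ProducerQueue`, `post`, `drain_into` and `run_demo` are hypothetical names chosen for this example, and the timestamp-based ordering step is left out.

```cpp
#include <cassert>
#include <cstddef>
#include <list>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical per-producer queue: one std::list guarded by one std::mutex.
struct ProducerQueue {
    std::mutex mutex;
    std::list<int> items;

    // Producer side: allocate the node outside the lock, then splice it in.
    void post(int value) {
        std::list<int> tmp{value};                 // allocation happens unlocked
        std::lock_guard<std::mutex> lock(mutex);
        items.splice(items.end(), tmp);            // only pointer updates under the lock
    }

    // Consumer side: move everything queued so far to the end of `out`.
    void drain_into(std::list<int>& out) {
        std::lock_guard<std::mutex> lock(mutex);
        out.splice(out.end(), items);
    }
};

// Starts `producers` threads that each post `per_producer` items into their
// own queue, then consolidates all queues and returns the total item count.
std::size_t run_demo(std::size_t producers, int per_producer) {
    std::vector<ProducerQueue> queues(producers);
    std::vector<std::thread> threads;
    for (std::size_t p = 0; p < producers; ++p) {
        threads.emplace_back([&queues, p, per_producer] {
            for (int i = 0; i < per_producer; ++i) queues[p].post(i);
        });
    }
    for (auto& t : threads) t.join();

    std::list<int> consolidated;
    for (auto& q : queues) q.drain_into(consolidated);
    return consolidated.size();
}
```

In a long-running program the consumer would call `drain_into` repeatedly while producers are still posting; the demo joins the producers first only to keep the example short.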

I am aware that std::list is not thread safe. In my application, threads keep adding elements to a global list. Another thread gets elements from the list and processes them one by one. However, I don't want the processing thread to lock the list all the time while processing is being done. So the processing thread locks the list, gets an element, unlocks the list, and processes the element. While processing is in progress, other threads keep adding elements to the list. Once processing is over, the processing thread locks the list again, deletes the processed element, and unlocks it.

Below is pseudo-code:

    std::list<int> mylist; /* Global list of integers */

    void add_thread(int element) /* Threads adding element to the list */

    void list_processing_thread() /* Processes elements from the list */
        for (std::list<int>::iterator it = mylist.begin(); it != mylist.end(); ++it)

Is this a correct approach (to process list elements in an efficient manner)? Will it cause any trouble?

The times were measured on 2 machines: my local Ubuntu VM and a remote server. Using std::queue, the average was almost the same on both machines: 750 microseconds. Then I 'upgraded' the std::queue to boost::lockfree::spsc_queue, so I could get rid of the mutexes protecting the queue. Avoiding locks makes the queue much faster. Lock-free code can be very tricky to write, so make sure you test your code well.

A spin lock must always be acquired or released either as a queued spin lock, or as an ordinary spin lock.
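One possible way to keep the list unlocked during processing is to detach the front element under the lock and work on it afterwards. The sketch below assumes a `std::mutex` named `mylist_mutex` (the question does not name its lock) and a hypothetical helper `take_front`; "processing" is reduced to summing the values. Unlike the approach in the question, the element is removed when it is taken, so no iterator into the shared list is held while it is unlocked.

```cpp
#include <cassert>
#include <list>
#include <mutex>
#include <thread>

std::list<int> mylist;      // shared list, as in the question
std::mutex mylist_mutex;    // assumed name; guards every access to mylist

// Producer threads: append one element under the lock.
void add_thread(int element) {
    std::lock_guard<std::mutex> lock(mylist_mutex);
    mylist.push_back(element);
}

// Hypothetical helper: detaches the front element while holding the lock.
// Returns false if the list was empty.
bool take_front(int& out) {
    std::list<int> taken;
    {
        std::lock_guard<std::mutex> lock(mylist_mutex);
        if (mylist.empty()) return false;
        taken.splice(taken.begin(), mylist, mylist.begin());
    }
    out = taken.front();    // the node is freed after the lock has been released
    return true;
}

// Consumer thread: handles `expected` elements and returns their sum.
long list_processing_thread(int expected) {
    long sum = 0;
    int done = 0;
    while (done < expected) {
        int value = 0;
        if (take_front(value)) {
            sum += value;                  // processing happens without the lock
            ++done;
        } else {
            std::this_thread::yield();     // nothing queued yet
        }
    }
    return sum;
}
```

A real consumer would normally wait on a `std::condition_variable` instead of polling with `yield`; the polling loop only keeps the sketch short.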

For a driver running at IRQL >= DISPATCH_LEVEL, KeAcquireInStackQueuedSpinLockAtDpcLevel acquires a spin lock as a queued spin lock. For more information, see Queued Spin Locks.

Drivers that are already running at an IRQL >= DISPATCH_LEVEL can call this routine to acquire the queued spin lock more quickly. Otherwise, use the KeAcquireInStackQueuedSpinLock routine to acquire the spin lock.

For a driver that is running at IRQL = DISPATCH_LEVEL, this routine improves performance by acquiring the lock without first setting the IRQL to DISPATCH_LEVEL, which, in this case, would be a redundant operation. For a driver that is running at IRQL > DISPATCH_LEVEL, this routine acquires the lock without modifying the current IRQL.

To release the spin lock, call the KeReleaseInStackQueuedSpinLockFromDpcLevel routine.

Like ordinary spin locks, queued spin locks must only be used in very special circumstances. For a description of when to use spin locks, see KeAcquireSpinLock.

Drivers must not combine calls to KeAcquireSpinLock and KeAcquireInStackQueuedSpinLock on the same spin lock.

MSMPI_Queuelock_release releases the Microsoft MPI library global lock. Syntax: void MSMPI_Queuelock_release(_In_ MSMPI_Lock_queue* queue). Parameters: queue [in] is the lock queue structure that represents the calling thread in the queue.
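To give a feel for what "queued" means here, the following is a user-space sketch of an MCS-style queue lock, in which each waiter spins on its own node. It is only an analogy: it is not the kernel implementation, it does not touch IRQL, and `QueueNode`, `QueuedSpinLock` and `count_with_lock` are hypothetical names. The caller-supplied node loosely plays the role that the KLOCK_QUEUE_HANDLE structure plays in the driver API.

```cpp
#include <atomic>
#include <cassert>
#include <thread>
#include <vector>

// Per-waiter queue entry, supplied by the caller (hypothetical type).
struct QueueNode {
    std::atomic<QueueNode*> next{nullptr};
    std::atomic<bool> waiting{false};
};

class QueuedSpinLock {
    std::atomic<QueueNode*> tail_{nullptr};   // last waiter, or nullptr if free
public:
    void acquire(QueueNode& me) {
        me.next.store(nullptr);
        me.waiting.store(true);
        QueueNode* prev = tail_.exchange(&me);     // join the end of the queue
        if (prev != nullptr) {
            prev->next.store(&me);                 // link behind the predecessor
            while (me.waiting.load()) {            // spin on our own node only
                std::this_thread::yield();
            }
        }
    }

    void release(QueueNode& me) {
        QueueNode* succ = me.next.load();
        if (succ == nullptr) {
            QueueNode* expected = &me;
            // No visible successor: try to mark the lock as free.
            if (tail_.compare_exchange_strong(expected, nullptr)) return;
            // A successor is enqueuing; wait until it has linked itself.
            while ((succ = me.next.load()) == nullptr) std::this_thread::yield();
        }
        succ->waiting.store(false);                // hand the lock over in FIFO order
    }
};

// Increments a plain counter from several threads under the lock.
long count_with_lock(int threads, int iterations) {
    QueuedSpinLock lock;
    long counter = 0;
    std::vector<std::thread> pool;
    for (int t = 0; t < threads; ++t) {
        pool.emplace_back([&lock, &counter, iterations] {
            QueueNode node;                        // lives on this thread's stack
            for (int i = 0; i < iterations; ++i) {
                lock.acquire(node);
                ++counter;
                lock.release(node);
            }
        });
    }
    for (auto& th : pool) th.join();
    return counter;
}
```

Because every waiter spins on a flag in its own node, waiters do not all hammer the same memory location, and the lock is granted in arrival order.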

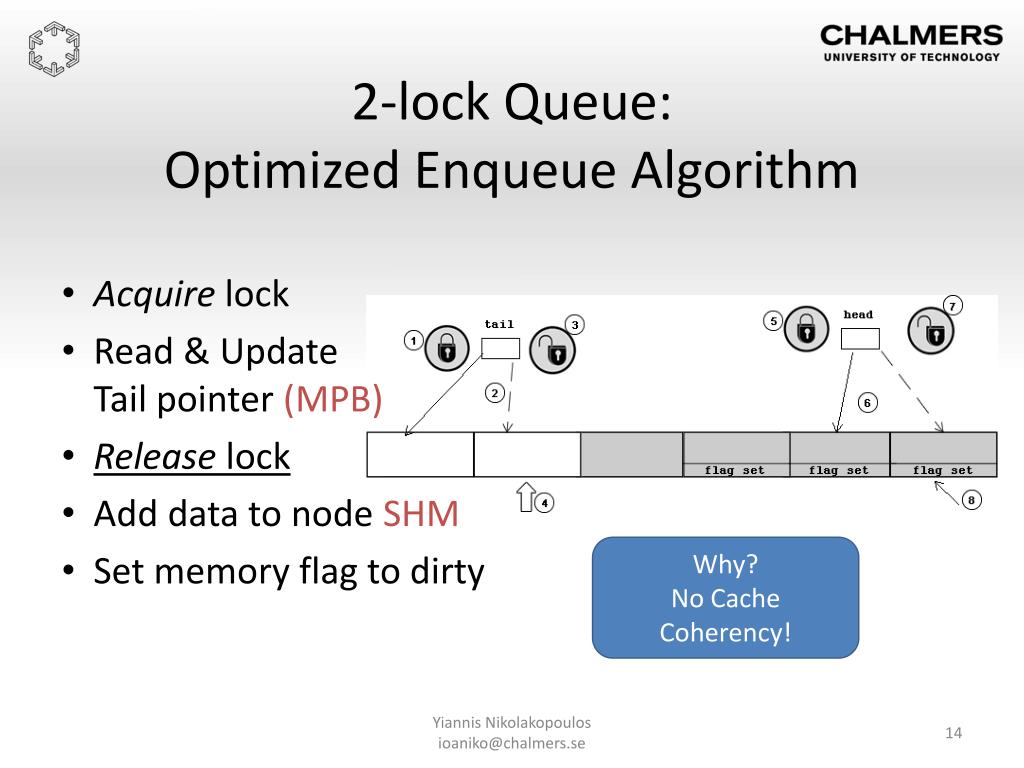

The KeAcquireInStackQueuedSpinLockAtDpcLevel routine acquires a queued spin lock when the caller is already running at IRQL >= DISPATCH_LEVEL. The routine has a void return type. Its spin lock parameter must have been initialized by a call to the KeInitializeSpinLock routine. Its lock handle parameter is a pointer to a caller-supplied KLOCK_QUEUE_HANDLE structure that the routine can use to return the spin lock queue handle. To release the lock, the caller passes this value to the KeReleaseInStackQueuedSpinLockFromDpcLevel routine.

Lock-free is a more formal thing (look for lock-free algorithms). The essence of it for data structures is that if two threads/processes access the data structure and one of them dies, the other one is still guaranteed to complete the operation. Lockless is about implementation: it means the algorithm does not use locks.

The two-lock concurrent queue algorithm performs well on dedicated multiprocessors under high contention. It is useful for multiprocessors without a universal atomic primitive.
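A two-lock queue can be sketched in C++ along the following lines, assuming the text refers to the design commonly attributed to Michael and Scott: a linked list with a dummy head node, one mutex for the head and one for the tail. `TwoLockQueue` is a hypothetical name, and the element type is assumed to be default-constructible. The `next` pointer is atomic because, when the queue is empty, an enqueuer and a dequeuer can touch the same dummy node while holding different locks.

```cpp
#include <atomic>
#include <cassert>
#include <mutex>
#include <thread>
#include <utility>

template <typename T>
class TwoLockQueue {
    struct Node {
        T value{};
        std::atomic<Node*> next{nullptr};
    };

    Node* head_;                 // dummy node; guarded by head_mutex_
    Node* tail_;                 // last node; guarded by tail_mutex_
    std::mutex head_mutex_;
    std::mutex tail_mutex_;

public:
    TwoLockQueue() : head_(new Node), tail_(head_) {}

    ~TwoLockQueue() {
        while (head_ != nullptr) {
            Node* next = head_->next.load();
            delete head_;
            head_ = next;
        }
    }

    TwoLockQueue(const TwoLockQueue&) = delete;
    TwoLockQueue& operator=(const TwoLockQueue&) = delete;

    // Producers only take the tail lock.
    void enqueue(T value) {
        Node* node = new Node;
        node->value = std::move(value);
        std::lock_guard<std::mutex> lock(tail_mutex_);
        tail_->next.store(node, std::memory_order_release);
        tail_ = node;
    }

    // Consumers only take the head lock. Returns false if the queue is empty.
    bool dequeue(T& out) {
        Node* old_head = nullptr;
        {
            std::lock_guard<std::mutex> lock(head_mutex_);
            Node* first = head_->next.load(std::memory_order_acquire);
            if (first == nullptr) return false;
            out = std::move(first->value);
            old_head = head_;
            head_ = first;       // the dequeued node becomes the new dummy
        }
        delete old_head;         // freed outside the critical section
        return true;
    }
};
```

Since enqueue and dequeue use different mutexes, a producer and a consumer can make progress at the same time; only producers contend with producers, and consumers with consumers.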
